Your Help Scout Agents Shouldn't Need to Open Three Other Tabs

Published on April 22, 2026

Move the context to the ticket, not the agent to the tabs.

Part 1 of 3 in the Pre-LLM Patterns series. See the series intro for the through-line.

A few years back I was reviewing a support workflow with a client who runs a portfolio of dropshipping storefronts. All support across every brand funnels into one shared Help Scout instance. I sat behind one of their agents for thirty minutes, just watching the mouse.

Every single ticket, same dance. Open the ticket. Read the customer's message. Alt-tab to the shipping tracker. Paste the order number. Wait. Alt-tab to the storefront admin. Look up the customer's address. Alt-tab to an internal order database. Check if the shipment was flagged. Alt-tab back to Help Scout. Start typing.

I clocked it at forty seconds of context-loading before she'd even started a reply. Multiply that by hundreds of tickets a day across eight languages and the client was essentially paying a support team to be browser tabs.

We fixed it by flipping the direction of the problem. The agent didn't need to get to the context faster. The context needed to live on the ticket, not in the agent's other tools.

The setup

The stack, roughly:

- Help Scout for the inbox, split into eight per-language mailboxes (EN, FR, DE, ES, IT, NL, PL, BR)

- A multi-carrier shipping API on the backend, auto-detecting carrier from tracking number across 40+ carriers

- An internal MySQL database holding the authoritative order, shipment, and checkout records

- An embedded "order status" widget running on every brand's storefront, connected to all of the above

The customer-facing flow was already good. Type a tracking number, get a translated status, hit a quick FAQ if your shipment state had one ("your order is in transit, estimated 3-5 days"). Only a small fraction of users actually escalated to "I need a human."

But when they did, the ticket that showed up in Help Scout was naked. Just the message body and the customer's email. Everything the agent needed to respond intelligently lived in three other tools.

The wrong fix

The first instinct most teams have here is to train agents to switch faster, or to build a "support dashboard" that pulls from all three systems. Both are valid improvements. Both miss the point.

Training is a per-agent cost that never amortizes. Hire a new agent, reteach the dance. An aggregation dashboard is a fourth tool to open, which is progress in some metaphysical sense, but you're still opening something.

The move is not "make context-switching faster." The move is "don't context-switch."

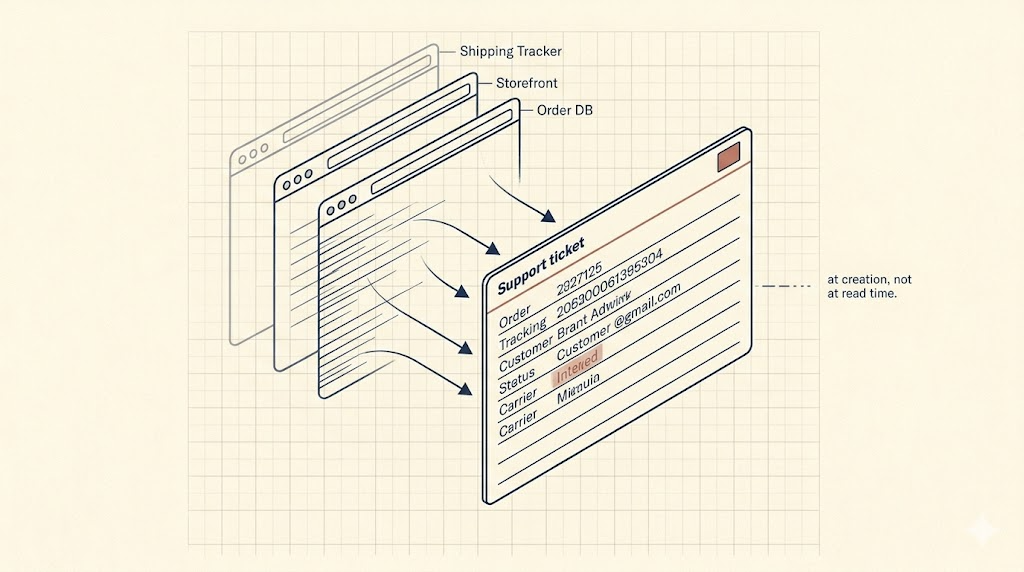

At creation, not at read time

The principle fits in one sentence. Enrich the ticket when it's born, not when it's opened.

When a customer escalated from the order status widget, we already knew more about them at that moment than the agent would ever dig up later. The widget had pulled shipment state, carrier, order history, address, phone, and locale by the time the customer clicked "I need help." Instead of forwarding just the typed message into Help Scout, we forwarded everything we knew.

The Help Scout conversation got created with:

- Customer email and name, pulled from the order record so the agent never had to ask

- Full order context in the body: tracking number, carrier, current shipment state, order ID, product, shipping address, phone

- A locale field so the agent knew what language to reply in

- A source-brand tag so the agent knew which storefront this came from, without having to check the email signature

- Routed to the correct per-language mailbox at create time, not shuffled around after

Pseudo-code for the create call looked like:

    POST /v2/conversations
    {
      "mailboxId": mailbox_for_locale(locale),
      "subject": customer_subject,
      "customer": { "email": customer.email, "firstName": customer.first_name },
      "type": "email",
      "status": "active",
      "tags": [source_brand, "livechat"],
      "threads": [{
        "type": "customer",
        "text": format_context_block(order, shipment, tracking, message)
      }]
    }
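The `mailbox_for_locale` helper in the pseudo-code isn't spelled out in this post. Here is a minimal Python sketch of what it could look like, assuming a static lookup table. The mailbox IDs are placeholders, and mapping `pt` to the BR mailbox is an assumption about what that mailbox serves.

```python
# Hypothetical mailbox IDs; the real values come from your Help Scout account.
MAILBOX_BY_LANG = {
    "en": 1001, "fr": 1002, "de": 1003, "es": 1004,
    "it": 1005, "nl": 1006, "pl": 1007, "br": 1008,
    "pt": 1008,  # assumption: the BR mailbox handles Portuguese
}
DEFAULT_LANG = "en"


def mailbox_for_locale(locale: str) -> int:
    """Map a locale such as 'fr', 'fr-FR' or 'fr_CA' to a mailbox ID.

    Unknown languages fall back to the default mailbox so the ticket
    is never created without a destination.
    """
    lang = locale.strip().lower().replace("_", "-").split("-")[0]
    return MAILBOX_BY_LANG.get(lang, MAILBOX_BY_LANG[DEFAULT_LANG])
```

Falling back to a default mailbox is a design choice, not a requirement. Raising on an unknown locale would surface gaps in the mapping sooner, at the cost of a failed ticket creation.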

The interesting bit is the format_context_block function. That's where all the cross-platform enrichment happens. By the time the conversation is born, it already has what the agent needs.
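As a rough illustration, here is a Python sketch of what a `format_context_block` could do. It keeps the four arguments from the pseudo-code, but the dictionary keys (`shipped_on`, `sla_days`, and so on) and the optional `today` parameter are hypothetical, not the original implementation.

```python
from datetime import date
from typing import Optional


def format_context_block(order: dict, shipment: dict, tracking: dict,
                         message: str, today: Optional[date] = None) -> str:
    """Append a one-glance order summary to the customer's message.

    All dictionary keys are illustrative; adapt them to your own records.
    `today` is injectable so the elapsed-days figure can be tested.
    """
    today = today or date.today()
    days_elapsed = (today - shipment["shipped_on"]).days
    summary = " | ".join([
        f"Order: #{order['id']}",
        f"Product: {order['product']}",
        f"Carrier: {tracking['carrier']}",
        f"Status: {tracking['status']} "
        f"({days_elapsed} days elapsed, SLA {shipment['sla_days']})",
        f"Shipping to: {order['shipping_address']}",
    ])
    details = f"Tracking: {tracking['number']} | Phone: {order['phone']}"
    # The customer's own words come first; the enrichment sits below a rule.
    return f"{message}\n\n---\n{summary}\n{details}"
```

If the thread body is rendered as HTML in your setup, the line breaks would likely need converting to `<br>` and the customer's message escaping first. That step is left out here to keep the sketch short.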

From the agent's seat

Before: open ticket, see a message like "where is my order??", alt-tab three times to figure out what order they meant.

After: open ticket, see the message followed by a structured block: Order: #A-4421 | Product: [name] | Carrier: [name] | Status: In Transit (8 days elapsed, SLA 14) | Shipping to: [address]. The agent types a reply. No alt-tabs.

We measured average handle time before and after. I won't share the numbers because the build's under wraps, but the delta was big enough that the same team handled a higher ticket volume through a holiday season without overtime.

One gotcha

If I were rebuilding this today, I'd lean harder on Help Scout's typed custom fields rather than stuffing everything into the thread body. Custom fields are sortable, filterable, reportable. Body text is not. A few fields like order status, source brand, and tier unlock reporting and routing that free-form text can't.
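A sketch of what that could look like in Python. As far as I know, the create-conversation payload accepts a `fields` array of `{id, value}` objects, but verify that against the current Help Scout API reference before relying on it. The field IDs below are placeholders, since real IDs are specific to each mailbox.

```python
# Hypothetical custom field IDs; look up the real ones for each mailbox.
FIELD_IDS = {"order_status": 501, "source_brand": 502, "tier": 503}


def custom_fields(values: dict) -> list:
    """Turn {'order_status': 'in_transit'} into [{'id': 501, 'value': 'in_transit'}].

    Raises KeyError for names with no configured field ID, so a typo
    fails loudly instead of silently dropping data from the ticket.
    """
    unknown = set(values) - set(FIELD_IDS)
    if unknown:
        raise KeyError(f"no field ID configured for: {sorted(unknown)}")
    return [{"id": FIELD_IDS[name], "value": value}
            for name, value in values.items()]


# Merged into the create payload alongside mailboxId, subject, threads, etc.
payload_fragment = {
    "fields": custom_fields({"order_status": "in_transit", "source_brand": "brand-a"}),
}
```

The thread body can still carry the human-readable block. The typed fields are there for the sorting, filtering, and reporting that body text can't support.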

A second, smaller thing: put the routing decision (which mailbox) in the create call, not in a follow-up move. Moving conversations after creation is fine for a handful. At volume it burns API calls and leaves a gap where the ticket briefly sits in the wrong mailbox.

Why this matters more now, not less

This build predates the current LLM wave. It's a deterministic, boring, context-enrichment pattern with zero generative AI in the loop. It ran for years on that stack.

Here's the catch, though. The exact same pattern is table stakes for any LLM-drafted response pipeline. If you want a model to draft replies, it needs the same context the human agent needed. Not "sort of." Exactly. Missing context doesn't politely degrade the draft. It makes the model hallucinate the missing pieces.

The context layer you build for your agents is the context layer your AI needs. Build it once, staff it twice.

Teams bolting a response-drafting LLM onto a ticket-only context layer are getting poor drafts and blaming the model, when the actual failure is upstream. The LLM didn't read the order database. Nobody plugged it in.
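One way to make that upstream failure visible is to refuse to draft when the ticket lacks context. The Python sketch below is illustrative only: the required keys, the ticket shape, and the prompt wording are all assumptions, and no particular model API is implied.

```python
# Hypothetical list of what a draft needs; tune it to your own tickets.
REQUIRED_CONTEXT = ("order_id", "carrier", "shipment_status",
                    "shipping_address", "locale")


def missing_context(ticket: dict) -> list:
    """Return the required keys that are absent or empty on the ticket."""
    return [key for key in REQUIRED_CONTEXT if not ticket.get(key)]


def build_draft_prompt(ticket: dict) -> str:
    """Build a drafting prompt, or fail loudly if context is missing."""
    missing = missing_context(ticket)
    if missing:
        # Better to hand the ticket to a human than let a model guess.
        raise ValueError(
            f"refusing to draft, missing context: {', '.join(missing)}")
    facts = "\n".join(f"{key}: {ticket[key]}" for key in REQUIRED_CONTEXT)
    return (
        "Draft a reply using only the facts below.\n"
        f"{facts}\n\n"
        f"Customer message:\n{ticket.get('message', '')}"
    )
```

The same `missing_context` check doubles as an audit for human agents. If it returns anything for a typical ticket, someone is still opening another tab.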

Takeaway

Before investing in AI-drafted replies, pre-generated suggestions, "co-pilot for support," or any of the newer shiny layers, check what lives on the ticket at the moment it's created. If your best agent would still have to open three other tabs to do the job, the AI will too.

The pattern is older than LLMs. It's going to outlast them.

If your agents are still opening three other tabs on every ticket, or you'd like this exact context-enrichment pattern wired into your Help Scout (or Zendesk, or Intercom) setup, book a discovery call with me.

Other pieces in this series