Rule-Based Triage Is Having a Quiet Comeback

Published on April 22, 2026

Or: why I'd still classify support tickets with if-statements before letting an LLM near them.

Part 3 of 3 in the Pre-LLM Patterns series. See the series intro for the through-line.

Everyone's reaching for an LLM to triage support tickets right now. Classify by intent, route to queue, auto-respond on the simple ones. Seductive. Fast to prototype. Demos beautifully.

A few years back I built a triage layer for a client running a portfolio of dropshipping storefronts. Pure if-statements against a shipping-status enum. Seven buckets, one switch statement.

It closed about 60% of their tickets without a human touching them.

Nothing's happened in the intervening years to convince me the switch statement was the wrong approach. If anything, the LLM era has made rule-based triage more valuable, not less.

The problem

On the client's support page, the single biggest ticket category was, predictably, "where is my order?" Customers would type a tracking number into the order-status widget and then immediately send a message asking where their package was. The human agent would look up the same tracking number the customer had just typed. Answer. Close. Next ticket.

Multiply by thousands of tickets a month. Multiply again by eight languages. The team was drowning in tickets that could be answered by the system that had just delivered the customer to them.

The wrong fix

The instinct, especially in 2026, is to throw an LLM at the routing problem. Classify the intent ("shipping question", "refund request", "product defect"), route accordingly, maybe auto-respond on the easy ones.

Three problems with that approach.

First, LLM routing is non-deterministic. The same ticket text gets classified as "shipping question" on Monday and "general inquiry" on Thursday because the upstream model had a slightly different day. Support routing rules can't have moods.

Second, it's hard to test. Rule-based classification gets a fixture file and a CI run. You know exactly what the router will do on 2,000 historical tickets. An LLM router gets vibes and a prompt you tweaked last Tuesday.

Third, it's slower and more expensive. One LLM call per incoming ticket, to answer a question that a switch statement answers in microseconds at zero cost.

The real failure mode: LLM-as-router misclassifies "refund" as "general inquiry" exactly once, and now someone's in your legal team's inbox asking why a customer got a cheery FAQ about shipping when they were trying to cancel.
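To make the fixture-file point concrete, here is a minimal Python sketch of that CI check. The router is a stand-in (a set lookup instead of the full switch), and the fixture rows are made up for illustration; in practice the file would hold historical tickets labelled with the bucket a human agreed with. `KNOWN_STATUSES`, `check_fixtures`, and the CSV column names are hypothetical.

```python
import csv
import io

# Stand-in router: each known status maps to its own bucket, anything else to "generic".
KNOWN_STATUSES = frozenset({
    "pending", "in_transit", "out_for_delivery", "delivered",
    "not_found", "exception", "expired",
})

def route(tracking_status: str) -> str:
    """Deterministic: the same status lands in the same bucket on every run."""
    return tracking_status if tracking_status in KNOWN_STATUSES else "generic"

# Hypothetical fixture file: one row per historical ticket.
FIXTURES = """tracking_status,expected_bucket
pending,pending
in_transit,in_transit
delivered,delivered
exception,exception
carrier_glitch,generic
"""

def check_fixtures(fixture_csv: str) -> list[tuple[str, str, str]]:
    """Return (status, expected, actual) for every row the router gets wrong."""
    failures = []
    for row in csv.DictReader(io.StringIO(fixture_csv)):
        actual = route(row["tracking_status"])
        if actual != row["expected_bucket"]:
            failures.append((row["tracking_status"], row["expected_bucket"], actual))
    return failures

# In CI, an empty failure list is the pass condition.
assert check_fixtures(FIXTURES) == []
```

Swap the stand-in for the real router and point it at 2,000 rows instead of five; the test keeps the same shape, and it gives the same answer every time it runs.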

The right skeleton

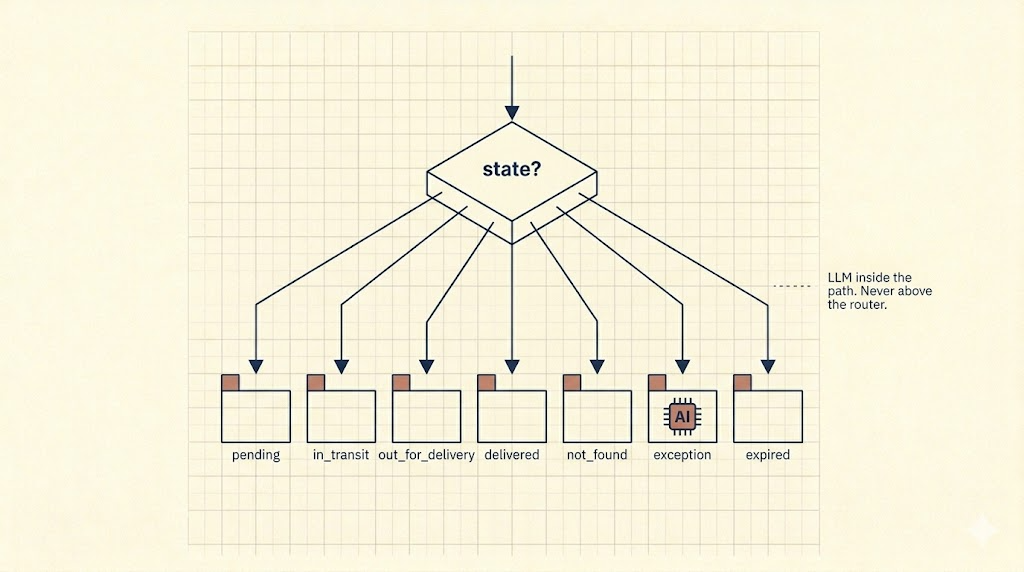

The shape is old and boring. Classify on structured state you already have. Each bucket gets a path. Only escalate on explicit signals.

For this client, the structured state was shipping status. The shipping API already gave us an enum:

- pending: carrier hasn't scanned the package yet

- in_transit: normal

- out_for_delivery: should arrive today

- delivered: carrier says delivered

- not_found: tracking number doesn't resolve

- exception: something went wrong at customs, weather, etc.

- expired: too old, carrier purged it
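One way to pin that enum down in Python, so an unexpected string can't quietly become an eighth bucket. This is a sketch: it assumes the API returns the lowercase strings listed above, and `ShippingStatus` and `parse_status` are hypothetical names, not the shipping API's own types.

```python
from enum import Enum
from typing import Optional

class ShippingStatus(str, Enum):
    """The seven carrier states the shipping API reports."""
    PENDING = "pending"                    # carrier hasn't scanned the package yet
    IN_TRANSIT = "in_transit"              # normal
    OUT_FOR_DELIVERY = "out_for_delivery"  # should arrive today
    DELIVERED = "delivered"                # carrier says delivered
    NOT_FOUND = "not_found"                # tracking number doesn't resolve
    EXCEPTION = "exception"                # customs, weather, etc.
    EXPIRED = "expired"                    # too old, carrier purged it

def parse_status(raw: str) -> Optional[ShippingStatus]:
    """Map the API's raw string to the enum; None for anything unrecognised."""
    try:
        # Assumption: tolerate stray whitespace and casing from the API.
        return ShippingStatus(raw.strip().lower())
    except ValueError:
        return None
```

Returning `None` rather than raising lets the router send anything unrecognised to its generic fallback.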

Seven states, seven topic menus, seven FAQ sets templated with the customer's actual order data. That's the whole router:

```python
def route(tracking_status):
    # `topics` holds one pre-built topic menu per shipping status, plus a generic fallback.
    match tracking_status:
        case "pending": return topics.pending
        case "in_transit": return topics.in_transit
        case "out_for_delivery": return topics.out_for_delivery
        case "delivered": return topics.delivered
        case "not_found": return topics.not_found
        case "exception": return topics.exception
        case "expired": return topics.expired
        case _: return topics.generic  # unknown or missing status
```

A "delivered but the customer says they didn't receive it" FAQ for the delivered bucket. An "it's in transit, here's what the carrier timeline looks like" FAQ for in_transit. A "the carrier hasn't scanned it yet, that's normal under 24 hours" FAQ for pending. Each FAQ was pre-filled with the customer's actual tracking number, product, carrier, and expected delivery window.
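A minimal sketch of that pre-filling step, using Python's standard `string.Template`. The FAQ wording, the field names, and the order values are invented for illustration; the real templates existed per bucket and per language.

```python
from string import Template

# Hypothetical FAQ copy for two of the seven buckets.
FAQ_TEMPLATES = {
    "in_transit": Template(
        "Your $product is on its way with $carrier (tracking $tracking_number). "
        "The carrier currently expects delivery $delivery_window."
    ),
    "pending": Template(
        "$carrier hasn't scanned tracking number $tracking_number yet. "
        "That's normal for the first 24 hours after your $product ships."
    ),
}

def render_faq(bucket: str, order: dict[str, str]) -> str:
    """Fill the bucket's FAQ with the customer's real order data.

    Template.substitute raises KeyError when a field is missing, so a
    half-filled answer fails loudly instead of reaching the customer.
    """
    return FAQ_TEMPLATES[bucket].substitute(order)

# Illustrative order data, not real customer records.
order = {
    "product": "desk lamp",
    "carrier": "ExampleCarrier",
    "tracking_number": "ABC123",
    "delivery_window": "between May 2 and May 4",
}
print(render_faq("in_transit", order))
```

`substitute` rather than `safe_substitute` is deliberate: `safe_substitute` would leave a literal `$tracking_number` in the customer-facing text.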

A ticket only opened when there was a real signal that a canned answer wouldn't do:

- Customer uploaded an attachment (photo of a damaged product, screenshot of an error)

- Customer typed free text that didn't match any known topic

- Customer clicked a hard-routed topic (refund, wrong address, "package never arrived")

Everything else got answered by the system and never touched a human.
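Those three escalation signals fit in one small predicate. A sketch under assumptions: the `Interaction` shape and the topic identifiers in `HARD_ROUTED_TOPICS` are hypothetical stand-ins for whatever the support widget actually records.

```python
from dataclasses import dataclass
from typing import Optional

# Topics that always go to a human; identifiers are hypothetical.
HARD_ROUTED_TOPICS = frozenset({"refund", "wrong_address", "package_never_arrived"})

@dataclass
class Interaction:
    has_attachment: bool = False         # photo of damage, screenshot of an error
    free_text: Optional[str] = None      # whatever the customer typed, if anything
    matched_topic: Optional[str] = None  # known topic the free text resolved to, if any
    clicked_topic: Optional[str] = None  # topic the customer clicked, if any

def should_open_ticket(i: Interaction) -> bool:
    """True only on an explicit signal that a canned answer won't do."""
    if i.has_attachment:
        return True
    if (i.free_text or "").strip() and i.matched_topic is None:
        return True  # free text that didn't match any known topic
    if i.clicked_topic in HARD_ROUTED_TOPICS:
        return True
    return False     # everything else: answered by the system
```

The default is `False`: a ticket needs a reason to exist, rather than the FAQ needing a reason to answer.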

One gotcha

The biggest failure mode wasn't the router. It was the FAQs.

A templated answer that says "your package is in transit, hang tight" feels fine when the carrier scanned it yesterday. It feels insulting when the last scan was three weeks ago and the customer is already angry.

The fix: add guards inside the path. in_transit + last_scan_age > SLA is a different topic from plain in_transit. Same router, richer state. Escalate to a human on the degraded sub-states rather than shipping a tone-deaf FAQ.

This is the small detail that separates a router that works from one that gets blamed for bad customer experience.
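A minimal sketch of that guard. The post doesn't give the SLA value, so the 7-day `SCAN_SLA` here is a placeholder assumption, as are the `in_transit_stale` topic name and the `(topic, escalate)` return shape.

```python
from datetime import datetime, timedelta, timezone

# Assumption: a 7-day scan SLA; use whatever your carriers actually promise.
SCAN_SLA = timedelta(days=7)

def route_with_guards(status: str, last_scan_at: datetime, now: datetime,
                      sla: timedelta = SCAN_SLA) -> tuple[str, bool]:
    """Return (topic, escalate_to_human)."""
    last_scan_age = now - last_scan_at
    if status == "in_transit" and last_scan_age > sla:
        # Same bucket, richer state: a stale in_transit is a degraded sub-state
        # that goes to a human instead of the "hang tight" FAQ.
        return "in_transit_stale", True
    return status, False

now = datetime(2026, 4, 22, tzinfo=timezone.utc)
print(route_with_guards("in_transit", now - timedelta(days=21), now))
```

The router itself doesn't change; the guard only splits one bucket into a healthy path and a degraded one.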

Why this matters more now, not less

The LLM era hasn't replaced rule-based triage. It's made rule-based triage the right substrate for LLMs to sit on top of.

The better arrangement looks like this. Rule-based router picks the path. LLM sits inside the path, taking the templated FAQ and softening it for the specific phrasing of the customer's question. The model's job is voice and empathy. The router's job is answering "which bucket are we in."

Why this wins:

- Deterministic routing is testable and auditable

- You never misroute a refund request into a shipping FAQ

- LLM-drafted paraphrasing stays bounded to the canonical answer for the bucket

- Per-ticket cost stays low because the LLM is doing 30 tokens of rewording, not 500 tokens of classification plus reasoning

- When a prompt regression happens, the blast radius is the tone of one path, not the routing of the entire inbox
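A rough sketch of that arrangement, with the bounding made explicit. Assumptions throughout: `rephrase` stands in for whatever model call you use (its signature is hypothetical, not any vendor's API), and "bounded" is approximated crudely as "every required fact survives verbatim and the draft stays under a length cap". If either check fails, or the model call errors, the customer gets the canonical FAQ.

```python
from typing import Callable

def answer(canonical_faq: str, customer_message: str, required_facts: list[str],
           rephrase: Callable[[str, str], str], max_len: int = 600) -> str:
    """The router has already picked the bucket; the LLM only rewords its FAQ."""
    try:
        draft = rephrase(canonical_faq, customer_message)
    except Exception:
        return canonical_faq  # model down or timed out: the canned answer still works
    # Keep the draft bounded to the canonical answer.
    if not draft or len(draft) > max_len:
        return canonical_faq
    if any(fact not in draft for fact in required_facts):
        return canonical_faq  # the rewording dropped a tracking number, date, etc.
    return draft

faq = "Your package (tracking ABC123) is in transit and expected by May 4."
softer = lambda faq, msg: "Sorry for the wait! " + faq
print(answer(faq, "where is it??", ["ABC123", "May 4"], softer))
```

Substring matching is a blunt instrument, but it fails in the safe direction: a prompt regression degrades tone back to the template, never the routing.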

Takeaway

If you have deterministic state available (shipping status, subscription tier, account value, onboarding step, billing state), triage on that first. Let the LLM handle the part it's genuinely good at, the phrasing and the nuance, inside a bucket that's already been picked.

The newer the tool, the more the old skeleton matters.

An LLM at the top of your routing pipeline is a liability. An LLM inside a well-routed path is a feature.

If you'd like a rule-based triage layer built on top of your support stack, or your current LLM-routed setup audited for the classic failure modes, book a discovery call with me.