Three Pre-LLM Patterns I Keep Rebuilding in the LLM Era

Published on April 22, 2026

A mini-series on support automation that predates LLMs and now matters more because of them.

In 2019 I built a support-automation stack for a client running a portfolio of dropshipping storefronts. One centralized Help Scout instance, eight languages, hundreds of tickets a day, zero AI in the loop. It was all switch statements, lookup tables, and event-driven ticket enrichment.

The build ran cleanly for years. What I didn't expect was how often I'd catch myself, once LLMs arrived, recommending the same patterns to clients who assume the answer to every problem in 2026 is "ask the model."

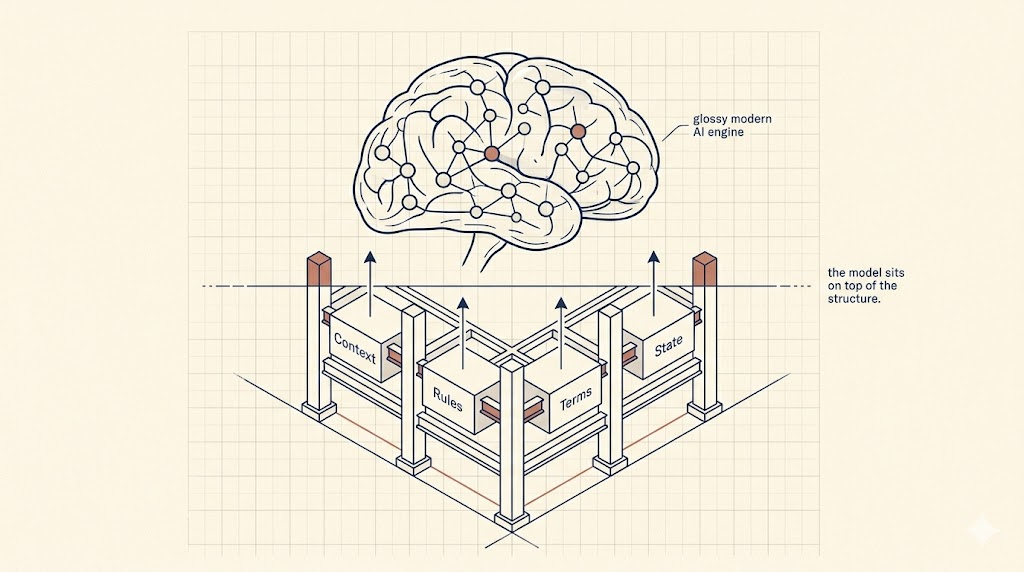

It usually isn't. The model sits inside a structure you still have to build. And the old, boring patterns (routing on deterministic state, enriching records at creation, substituting curated terms into generation output) are that structure.

This is a three-part series on three of those patterns. Each one is pre-LLM. Each one is more valuable now than it was in 2019.

Part 1: Your Help Scout Agents Shouldn't Need to Open Three Other Tabs

Agents were opening three other tools on every single ticket to reconstruct context that already existed elsewhere in the stack. We fixed it by enriching the ticket at creation, not at read time. The agents stopped tab-switching.

Years later, the same context layer is what any LLM-drafted reply pipeline needs. An LLM with no context writes the same bad replies that an agent with no context would.
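Here's a minimal sketch of "enrich at creation" in Python. Everything in it is hypothetical: the `Ticket` shape, the `find_order` lookup, and the chosen fields are illustrations, not Help Scout's data model or API. The point it shows is that the lookup runs once when the ticket arrives, and the stored context then feeds both the agent's view and an LLM drafting prompt.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical ticket record; NOT Help Scout's actual data model.
@dataclass
class Ticket:
    id: int
    customer_email: str
    body: str
    context: dict[str, str] = field(default_factory=dict)

# Hypothetical lookup: returns order fields for an email, or None if nothing matches.
OrderLookup = Callable[[str], Optional[dict[str, str]]]

# Fields copied onto the ticket; an illustrative choice, not a fixed schema.
CONTEXT_FIELDS = ("order_id", "status", "carrier", "tracking")

def enrich_on_create(ticket: Ticket, find_order: OrderLookup) -> Ticket:
    """Run once, when the ticket is created, rather than every time it is opened."""
    order = find_order(ticket.customer_email)
    if order is None:
        ticket.context["order"] = "no matching order"
        return ticket
    for key in CONTEXT_FIELDS:
        ticket.context[key] = order.get(key, "unknown")
    return ticket

def context_block(ticket: Ticket) -> str:
    """Render the stored context; an agent sidebar and an LLM prompt can share it."""
    return "\n".join(f"{k}: {v}" for k, v in sorted(ticket.context.items()))

def draft_prompt(ticket: Ticket) -> str:
    """Hypothetical drafting prompt built from the same enriched record."""
    return f"Order context:\n{context_block(ticket)}\n\nCustomer message:\n{ticket.body}"
```

The model never does the lookup itself; it reads the same record the agent reads, so both start from the same facts.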

Part 2: A Termbase Overlay, the Oldest Brand-Voice Guardrail That Still Works

Google Translate was mangling carrier names and product phrasing in customer-facing emails across eight languages. The fix was a 200-row lookup table and thirty lines of string replacement around the translation call.

LLMs mangle the same things (brand names, SLAs, trademarks), just with better grammar. The lookup table is still the cheapest guardrail you can put on any generation engine.
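A minimal sketch of a termbase overlay in Python, assuming one common approach: swap protected terms for opaque placeholders before the engine call, then put the approved target-language rendering back afterwards. The termbase rows and the `translate` callable are hypothetical stand-ins (a real table would have ~200 rows and the callable would wrap Google Translate or an LLM); the post doesn't say whether the original build used placeholders or plain post-translation find-and-replace.

```python
from typing import Callable

# Hypothetical termbase: source term -> approved rendering per target language.
TERMBASE: dict[str, dict[str, str]] = {
    "Royal Mail": {"de": "Royal Mail", "fr": "Royal Mail"},
    "Express Tracked": {"de": "Express mit Sendungsverfolgung", "fr": "Express suivi"},
}

def protect(text: str, lang: str) -> tuple[str, dict[str, str]]:
    """Replace each termbase hit with a placeholder; remember what it should become."""
    restore: dict[str, str] = {}
    # Longest terms first, so a long term isn't split up by a shorter overlapping one.
    for i, term in enumerate(sorted(TERMBASE, key=len, reverse=True)):
        if term in text:
            token = f"__TERM{i}__"
            text = text.replace(term, token)
            # Fall back to the source term when no approved rendering exists for lang.
            restore[token] = TERMBASE[term].get(lang, term)
    return text, restore

def translate_with_termbase(
    text: str, lang: str, translate: Callable[[str, str], str]
) -> str:
    """Wrap any (text, lang) -> text engine with the termbase overlay."""
    masked, restore = protect(text, lang)
    translated = translate(masked, lang)
    for token, approved in restore.items():
        translated = translated.replace(token, approved)
    return translated
```

Real engines occasionally alter placeholder tokens, so in practice it's worth checking that every token survived before sending the result.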

Part 3: Rule-Based Triage Is Having a Quiet Comeback

Everyone's reaching for an LLM to triage support tickets right now. The rule-based approach (classify on structured state you already have, route to a pre-canned topic menu) is faster, cheaper, testable, and won't misroute a refund request into a shipping FAQ on a bad day.

The LLM belongs inside the routed path, softening the templated reply. Not above the router, classifying what bucket to put things in.
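A minimal Python sketch of that split, under assumed names: the `Ticket` fields, the status strings, and the template wording are hypothetical. Routing is plain rules on structured state; the optional `soften` callable stands in for an LLM call that may reword the already-chosen template but never picks the bucket.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional

class Topic(Enum):
    REFUND = "refund"
    NOT_SHIPPED_YET = "not_shipped_yet"
    WHERE_IS_MY_ORDER = "where_is_my_order"
    GENERAL = "general"

@dataclass
class Ticket:
    customer_name: str
    order_id: Optional[str]
    order_status: Optional[str]  # hypothetical: "unfulfilled", "shipped", ...; None if no order matched
    refund_requested: bool       # hypothetical: set from a contact-form field, not inferred from free text

def route(t: Ticket) -> Topic:
    """Deterministic rules on structured state, checked in priority order; first match wins."""
    if t.refund_requested:
        return Topic.REFUND
    if t.order_status == "unfulfilled":
        return Topic.NOT_SHIPPED_YET
    if t.order_status == "shipped":
        return Topic.WHERE_IS_MY_ORDER
    return Topic.GENERAL

# The pre-canned topic menu: one approved template per bucket (illustrative wording).
TEMPLATES: dict[Topic, str] = {
    Topic.REFUND: "Hi {name}, we've logged your refund request for order {order_id}.",
    Topic.NOT_SHIPPED_YET: "Hi {name}, order {order_id} hasn't shipped yet.",
    Topic.WHERE_IS_MY_ORDER: "Hi {name}, order {order_id} is on its way.",
    Topic.GENERAL: "Hi {name}, thanks for getting in touch.",
}

def draft_reply(t: Ticket, soften: Optional[Callable[[str], str]] = None) -> str:
    """Fill the routed template; an optional model call may reword it afterwards."""
    text = TEMPLATES[route(t)].format(name=t.customer_name, order_id=t.order_id)
    return soften(text) if soften else text
```

Because the refund rule is checked first, a refund request on a shipped order can't land in the shipping bucket, and that guarantee is a one-line unit test rather than a hope about the model's mood.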

The through-line

Three patterns. One thread.

In each case, an LLM can do more than a deterministic system could on its own, but only when it sits on top of a deterministic skeleton. The skeleton decides what's true: what state the order is in, what the approved brand phrasing is, what bucket the ticket belongs in. The model decides how to say it.

Skip the skeleton and the model does both jobs. That's where the bad demos come from.

None of this is new. Each pattern is older than the current model, and will be older than the next one.

That's the claim, anyway. Read them in order and tell me if I'm wrong.

If you're building (or untangling) a support stack and want a second pair of eyes on where the skeleton goes before you add the model, book a discovery call with me.